Crypto Storage and On-Chain Verifiable Cloud in the AI Era: An Objective Review and Structural Analysis of Major Projects (2025–2026)

Introduction: Why AI Is Re-Elevating the Role of Storage in Crypto Infrastructure

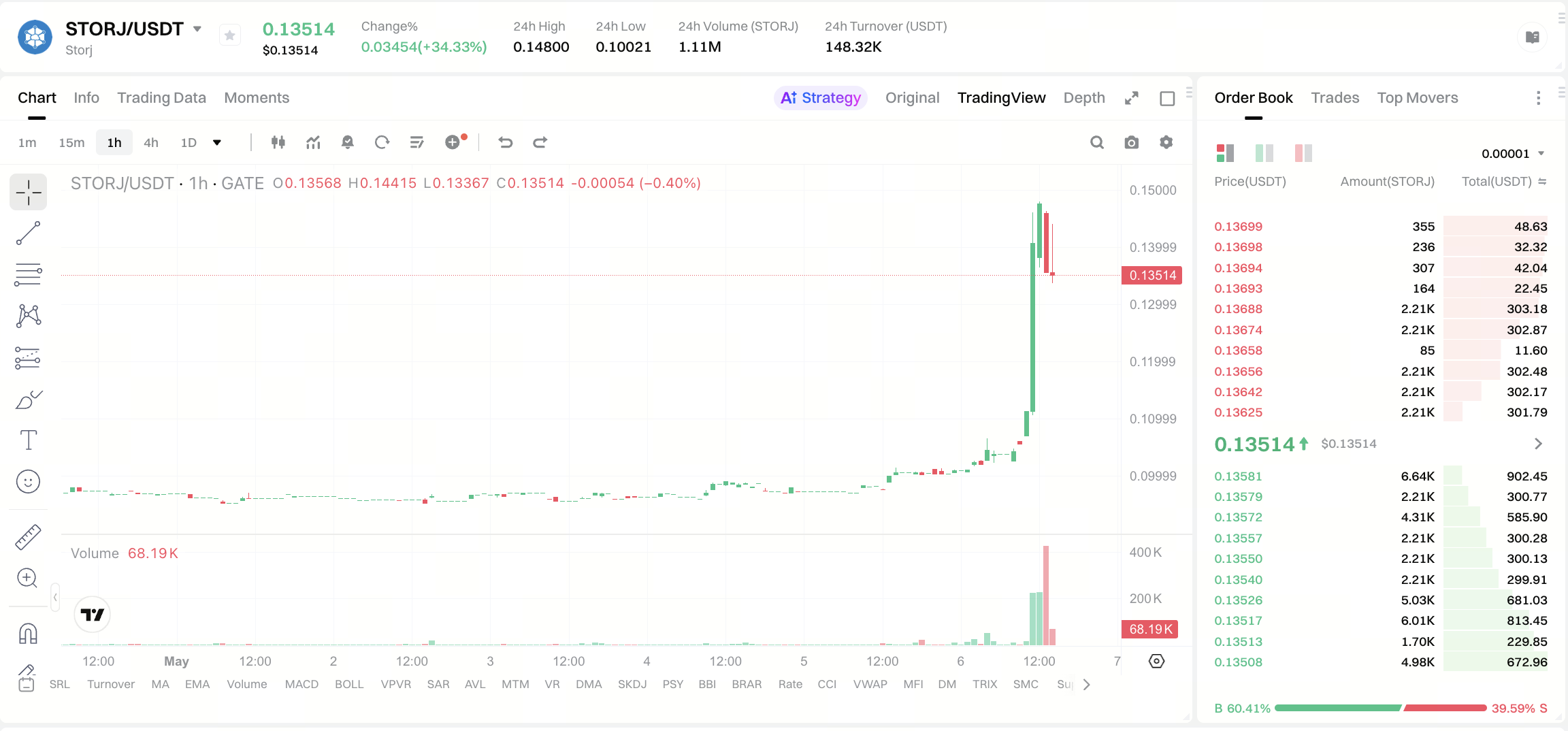

Image source: Gate Market Page

By 2026, storage and egress (outbound traffic) pricing, for both cloud and self-hosted deployments, is rising steadily. With AI training datasets, vector databases, and inference logs growing explosively, "unit price per GB" and "cross-region synchronization fees" are once again recurring items in the weekly reports of CFOs and infrastructure leads. In this environment, market sentiment is highly sensitive to "alternative supply": decentralized storage assets such as STORJ have seen sharp short-term rallies, quickly turning long-standing structural issues into trading themes. The real question is not the daily price swings but this: as enterprises pay higher bills to retain models and Agent data over the long term, why is the market shifting its expectations toward on-chain, verifiable, or DePIN-based storage?

It is worth clarifying that "storage" in the crypto context is not a single product form. It may refer to permanent web archiving with its own economic security model, to near-real-time object storage with hot/cold tiering, or simply to one module within a broader stack (alongside compute markets and Data Availability, DA). The following sections group projects and roadmaps by the problem they address rather than folding different technology layers into a single "storage token" narrative, and they keep price volatility separate from availability, SLAs, compliance, and long-term TCO.

Layered Demands: Training Data, Model Assets, Agent State, and Compliance Auditing

Before looking at specific projects, it helps to align on the following layered framework.

- Version freezing for training and evaluation data
  - Is long-term immutability with public auditability (via a timestamp chain) required?
  - Is a higher one-time write cost acceptable in exchange for lower downstream dispute risk?

- Lifecycle management for model weights and intermediate outputs
  - Is the focus archiving and backup (low-frequency reads) or online inference loading (latency-sensitive)?
  - Are on-chain contracts needed to control renewals, access whitelists, and settlement?

- Agent and session state
  - Is programmable authorization needed (e.g., by caller, task, or time window)?
  - For high-frequency state updates, KV or mutable layers are often more practical than purely permanent blobs.

- Enterprise procurement and compliance
  - Buyers typically ask about SLAs, regions, encryption and key management, verifiable proof formats, and egress billing.
  - Decentralized solutions that advertise node count but lack measurable SLOs will struggle with enterprise adoption.

These four aspects determine whether the evaluation focus should be on Arweave-like permanent layers, Filecoin Onchain Cloud-style verifiable clouds, programmable object storage like Walrus/Akave, or full-stack modules such as 0G, which integrates storage into an AI-native chain architecture.
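The Agent-state question above (authorization by caller, task, or time window) can be made concrete with a small policy check. The sketch below is a hypothetical, off-chain illustration: `AccessPolicy` and `is_authorized` are invented names, not the API of Seal, a Sui Move module, or any other project. An on-chain system would typically express the same three conditions in contract logic or key-release rules.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AccessPolicy:
    """Hypothetical per-object policy; not the schema of Seal or any other real product."""
    allowed_callers: frozenset
    allowed_tasks: frozenset
    not_before: datetime  # window start (inclusive), timezone-aware
    not_after: datetime   # window end (exclusive), timezone-aware


def is_authorized(policy: AccessPolicy, caller: str, task: str, now: datetime) -> bool:
    """Grant access only if caller, task, and current time all satisfy the policy."""
    return (
        caller in policy.allowed_callers
        and task in policy.allowed_tasks
        and policy.not_before <= now < policy.not_after
    )


utc = timezone.utc
policy = AccessPolicy(
    allowed_callers=frozenset({"agent-42"}),
    allowed_tasks=frozenset({"nightly-eval"}),
    not_before=datetime(2026, 1, 1, tzinfo=utc),
    not_after=datetime(2026, 2, 1, tzinfo=utc),
)
print(is_authorized(policy, "agent-42", "nightly-eval", datetime(2026, 1, 15, tzinfo=utc)))  # True
print(is_authorized(policy, "agent-42", "nightly-eval", datetime(2026, 3, 1, tzinfo=utc)))   # False (outside window)
print(is_authorized(policy, "agent-7", "nightly-eval", datetime(2026, 1, 15, tzinfo=utc)))   # False (unknown caller)
```

Keeping the window end exclusive avoids ambiguity when one grant expires exactly as the next begins.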

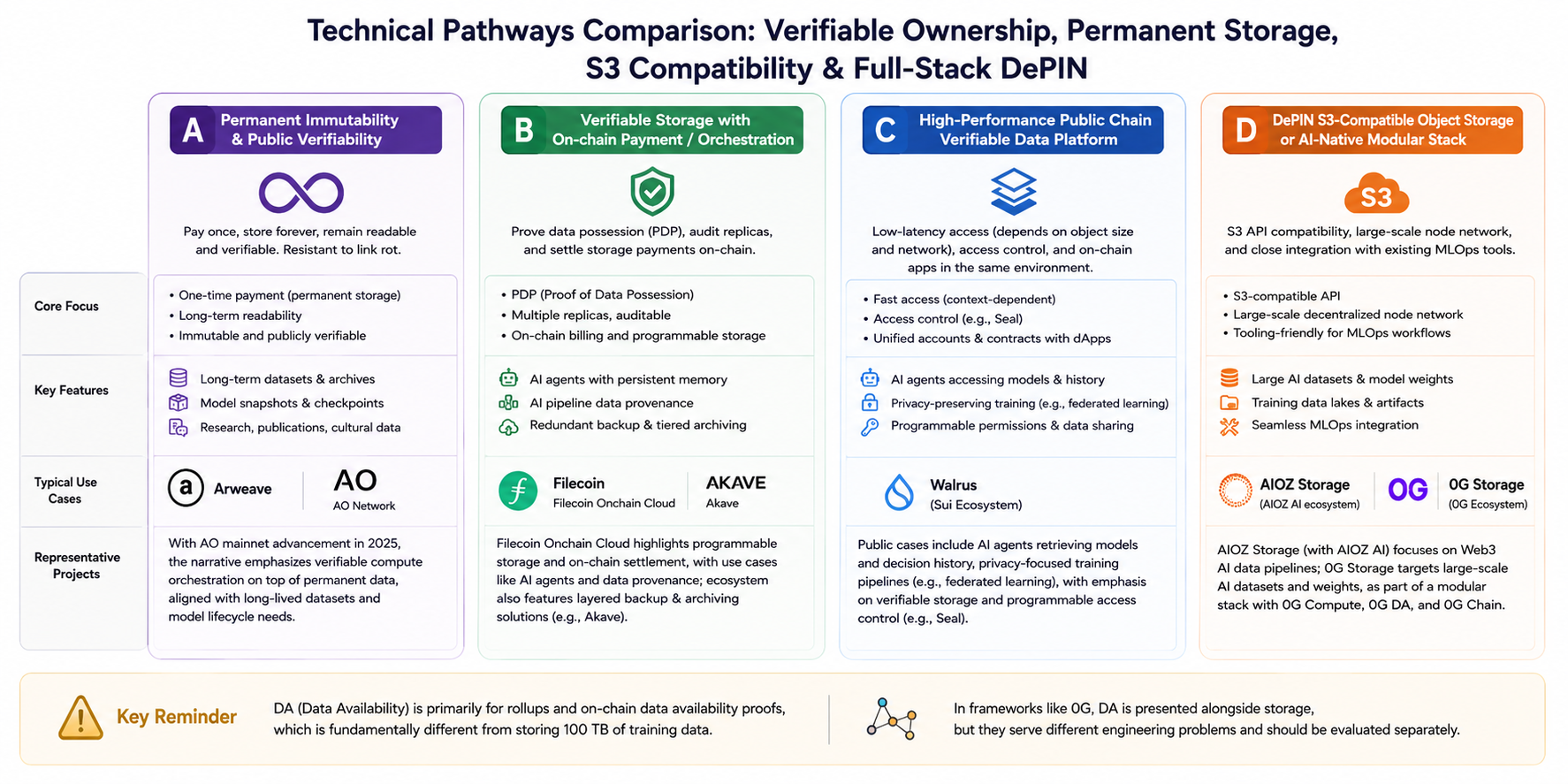

Technical Route Comparison: Verifiable Possession, Permanent Storage, Object Storage Compatibility, and Full-Stack DePIN

For a side-by-side comparison, these routes can be abstracted into four categories (with some overlap but distinct narrative focus):

Route A: Permanent Immutability and Public Reproducibility

- Keywords: one-time payment, long-term readability, combating link rot.

- Example: Arweave. Since the AO mainnet launched in 2025, the ecosystem narrative has emphasized verifiable computation orchestrated on top of permanent data, which fits the need to keep datasets and model snapshots aligned over the long term.
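The "one-time payment" keyword rests on an endowment-style argument: if the yearly cost of storing a byte keeps falling, a finite up-front payment can cover an unbounded horizon because the cost series is geometric. The sketch below shows only that arithmetic, under stated assumptions (a constant annual decline rate, no interest or inflation, hypothetical prices); `endowment_per_gb` is an illustrative name, and this is not Arweave's actual pricing formula.

```python
from typing import Optional


def endowment_per_gb(annual_cost: float, annual_decline: float, years: Optional[int] = None) -> float:
    """Up-front amount that covers storing 1 GB when its yearly cost falls by a fixed fraction.

    Year t costs annual_cost * (1 - annual_decline) ** t. Summing years 0..N-1 gives
    annual_cost * (1 - r**N) / annual_decline with r = 1 - annual_decline; with
    years=None (forever) the series converges to annual_cost / annual_decline.
    Interest, inflation, and replication overhead are ignored.
    """
    if not 0 < annual_decline < 1:
        raise ValueError("annual_decline must be between 0 and 1 (exclusive)")
    if years is None:
        return annual_cost / annual_decline
    r = 1 - annual_decline
    return annual_cost * (1 - r ** years) / annual_decline


# Hypothetical inputs: $0.01 per GB-year today, costs falling 5% per year.
print(round(endowment_per_gb(0.01, 0.05), 4))            # forever: 0.2
print(round(endowment_per_gb(0.01, 0.05, years=10), 4))  # first decade only: about 0.08
```

Because the perpetual amount is `annual_cost / annual_decline`, the result is very sensitive to the assumed decline rate: halving it doubles the required endowment, which is why write economics deserve scrutiny.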

Route B: Verifiable Storage with On-Chain Payment/Contract Orchestration

- Keywords: PDP (Proof of Data Possession), multi-replica auditability, on-chain billing.

- Example: Filecoin Onchain Cloud. Public documentation highlights programmable storage and on-chain settlement, with scenarios such as persistent storage managed by AI Agents and data provenance for AI pipelines. The ecosystem also includes upper-layer products such as Akave for layered backup and archiving.
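PDP's core idea is that a verifier who keeps only a small commitment can repeatedly challenge a storage provider on randomly chosen pieces of the data. The sketch below illustrates that idea with a simplified Merkle-tree spot check; it is not Filecoin's PDP specification, all function names are hypothetical, and production schemes add details omitted here, such as unpredictable challenge randomness, proving deadlines, and penalties for missed proofs.

```python
import hashlib
import secrets


def leaf_hash(chunk: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + chunk).digest()  # 0x00 prefix marks a leaf


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()  # 0x01 prefix marks an internal node


def build_levels(chunks: list) -> list:
    """All tree levels, leaves first; an odd last node is paired with itself."""
    levels = [[leaf_hash(c) for c in chunks]]
    while len(levels[-1]) > 1:
        cur = levels[-1]
        levels.append([
            node_hash(cur[i], cur[i + 1] if i + 1 < len(cur) else cur[i])
            for i in range(0, len(cur), 2)
        ])
    return levels


def prove(levels: list, index: int) -> list:
    """Prover side: sibling hashes from the challenged leaf up to the root."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        path.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return path


def verify(root: bytes, chunk: bytes, index: int, path: list) -> bool:
    """Verifier side: needs only the root commitment, not the stored data."""
    node = leaf_hash(chunk)
    for sibling in path:
        node = node_hash(node, sibling) if index % 2 == 0 else node_hash(sibling, node)
        index //= 2
    return node == root


def spot_check(root: bytes, n_chunks: int, respond, samples: int = 5) -> bool:
    """Challenge random chunk indices; `respond(i)` must return (chunk, path)."""
    for _ in range(samples):
        i = secrets.randbelow(n_chunks)
        chunk, path = respond(i)
        if not verify(root, chunk, i, path):
            return False
    return True


# 10 KiB of non-repeating sample data, split into 1 KiB chunks.
data = b"".join(hashlib.sha256(i.to_bytes(4, "big")).digest() for i in range(320))
chunks = [data[i:i + 1024] for i in range(0, len(data), 1024)]
levels = build_levels(chunks)
root = levels[-1][0]  # the only thing the verifier keeps

print(spot_check(root, len(chunks), lambda i: (chunks[i], prove(levels, i))))  # True
print(spot_check(root, len(chunks), lambda i: (b"lost", prove(levels, i))))    # False
```

Random sampling is probabilistic: if a provider has lost a fraction f of the chunks, each challenge catches it with probability f, so k independent challenges detect the loss with probability 1 - (1 - f)^k.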

Route C: Verifiable Data Platforms on High-Performance Public Chains

- Keywords: low-latency reads (depending on object size and network), access control (e.g., Seal), unified accounts and contracts with on-chain apps.

- Example: Walrus (Sui ecosystem). Official and partner case studies include storing AI Agent models and decision histories and privacy-related training workflows (such as federated learning), with a focus on verifiable, programmable permissions.

Route D: DePIN-Enabled S3-Compatible Object Storage or AI-Native Modular Stack Component

- Keywords: S3 API, node network scale, seamless integration with existing MLOps tools.

- Examples: AIOZ Storage (positioned alongside AIOZ AI in the Web3 AI data pipeline); 0G Storage, which the 0G documentation describes as the storage layer for large AI datasets and model weights and which forms a modular stack with 0G Compute, 0G DA, and 0G Chain.

Important distinction: DA (Data Availability) primarily serves rollups and on-chain data availability proofs; it guarantees that published data can be retrieved and checked, often only for a bounded window, rather than acting as long-term storage. Storing "100 TB of training data" is a different engineering problem. In full-stack frameworks like 0G, DA and storage are presented together, but they should still be evaluated separately.

Representative Project Overview (Classified by Route)

The following entries are based on public roadmaps and official blogs, not sorted by market cap or token performance, and do not constitute investment advice.

Permanent Layer: Arweave and AO Ecosystem

- Positioning: Focused on the permaweb and long-term readability; well suited to model and dataset snapshots, open science, and censorship-resistant publishing.

- AI integration: More about evidence chains and reproducibility than guaranteed low-latency reads.

- Evaluation points: Write economics, gateway availability, and whether read paths depend on specific gateway providers.

Verifiable Cloud: Filecoin Onchain Cloud and Upper-Layer Products Like Akave

- Positioning: Productizes verifiable possession, replica strategies, and on-chain payment for enterprise backup, compliance archiving, and auditable pipelines.

- AI integration: Public materials highlight Agent automation for storage and training/inference pipeline provenance.

- Evaluation points: Stored data volume and customer case studies, engineering cost of integrating proof tooling, and cross-region performance.

Verifiable Data Platform: Walrus

- Positioning: Built for verifiability, programmability, and privacy control (e.g., Seal), deeply integrated with the Sui app ecosystem.

- AI integration: Ecosystem partnerships cover the Agent data lifecycle and privacy-preserving training collaborations.

- Evaluation points: Latency by object size, encryption and key management boundaries, and integration depth.
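"Latency by object size" is only comparable across providers when it is reported per size bucket and against an explicit SLO, since a median over mixed object sizes hides tail behavior. A minimal sketch follows, assuming you have collected (object size, read latency) pairs from your own test reads; the bucket boundaries, SLO targets, and function names are hypothetical, and the nearest-rank percentile is one common method among several.

```python
import math
from collections import defaultdict

# Hypothetical size buckets: (inclusive upper bound in bytes, label).
BUCKETS = [(1 << 20, "<=1MiB"), (100 << 20, "<=100MiB"), (math.inf, ">100MiB")]


def bucket_for(size_bytes: int) -> str:
    for limit, label in BUCKETS:
        if size_bytes <= limit:
            return label
    raise ValueError("unreachable: the last bucket is unbounded")


def nearest_rank(sorted_ms: list, p: float) -> float:
    """Nearest-rank percentile of an ascending list."""
    rank = max(1, math.ceil(p * len(sorted_ms) / 100))
    return sorted_ms[rank - 1]


def latency_report(samples, p99_slo_ms: dict) -> dict:
    """samples: iterable of (object_size_bytes, read_latency_ms).

    Returns {bucket: (p50, p99, meets_p99_slo)} for every bucket that has data.
    """
    by_bucket = defaultdict(list)
    for size, ms in samples:
        by_bucket[bucket_for(size)].append(ms)
    report = {}
    for label, values in by_bucket.items():
        values.sort()
        p99 = nearest_rank(values, 99)
        report[label] = (nearest_rank(values, 50), p99, p99 <= p99_slo_ms[label])
    return report


# Made-up measurements: four small-object reads and one large-object read.
samples = [(4_096, 40), (8_192, 55), (500_000, 80), (900_000, 300), (200 << 20, 4_000)]
slo = {"<=1MiB": 250, "<=100MiB": 2_000, ">100MiB": 10_000}
print(latency_report(samples, slo))
# {'<=1MiB': (55, 300, False), '>100MiB': (4000, 4000, True)}
```

In practice each bucket needs far more samples than shown here before a p99 figure means anything.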

DePIN Object Storage: AIOZ Storage and Others

- Positioning: S3-compatible, emphasizing node scale and low-friction migration.

- AI integration: Directly aligned with engineering practices such as dataset hosting and artifact distribution.

- Evaluation points: A fair cost comparison with centralized cloud requires identical region, hot/cold tier, and egress assumptions.
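The fairness condition above can be enforced mechanically: price every candidate against the same workload and refuse to compare offers that differ in region or storage tier. The sketch below uses hypothetical names and placeholder prices; real quotes also include request fees, retrieval fees for cold tiers, minimum storage durations, and (for token-priced networks) exchange-rate exposure, all of which are omitted here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    name: str
    region: str
    tier: str                    # e.g. "hot" or "cold"
    storage_per_gb_month: float
    egress_per_gb: float
    write_per_gb: float = 0.0    # one-time ingest/write fee, if the offer has one


@dataclass(frozen=True)
class Workload:
    stored_gb: float
    egress_gb_per_month: float
    months: int


def tco(offer: Offer, wl: Workload) -> float:
    """Total cost over the horizon: one-time writes + storage + egress."""
    return (
        offer.write_per_gb * wl.stored_gb
        + offer.storage_per_gb_month * wl.stored_gb * wl.months
        + offer.egress_per_gb * wl.egress_gb_per_month * wl.months
    )


def compare(offers: list, wl: Workload) -> list:
    """Rank offers by TCO, refusing mismatched region/tier assumptions."""
    if len({o.region for o in offers}) > 1 or len({o.tier for o in offers}) > 1:
        raise ValueError("offers must share region and tier for a like-for-like comparison")
    return sorted((round(tco(o, wl), 2), o.name) for o in offers)


# All prices are hypothetical placeholders, not quotes from any provider.
wl = Workload(stored_gb=10_000, egress_gb_per_month=2_000, months=12)
offers = [
    Offer("centralized-cloud", "eu-west", "hot", storage_per_gb_month=0.02, egress_per_gb=0.09),
    Offer("depin-s3", "eu-west", "hot", storage_per_gb_month=0.006, egress_per_gb=0.0, write_per_gb=0.01),
]
print(compare(offers, wl))
```

Raising an error on mismatched tiers is deliberate: a cold-tier quote ranked against a hot-tier quote produces a number that looks precise but answers the wrong question.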

Full-Stack Modular: 0G

- Positioning: Integrates storage, compute, DA, and chain as modules under a unified deAIOS/AI L1 vision.

- AI integration: Documentation emphasizes high throughput, a storage layer for weights and logs, and a KV layer for embeddings and Agent state.

- Evaluation points: Whether each module's maturity matches the most critical bottleneck (often compute or the data pipeline).

Other Frequently Mentioned but Non-Storage-Focused Projects

- For example, Fluence and other GPU/decentralized compute projects are often cited in "AI + DePIN" discussions, but they should not be classified as storage infrastructure unless they explicitly offer large-scale object storage with an SLA.

Adoption Realities and Major Risks: Engineering, Economic Models, and Regulatory Compliance

Even with AI-aligned narratives, three main constraints remain for implementation:

- Engineering Constraints: Latency, Consistency, and Toolchains
  - Distributed systems often need extra middleware for small files, high QPS, cross-region sync, and resumable uploads.
  - "Decentralization" does not automatically mean lower cost; total cost of ownership (TCO) must be compared separately for cold archiving and for hot reads.

- Economic Model Constraints: Token Incentives and Actual Payment
  - Many networks use token incentives to subsidize both miners/nodes and end users, so headline usage may overstate organic, paying demand.
  - Token price volatility affects provider retention, which in turn affects long-term availability and service quality.

- Compliance and Data Governance: Keys, Cross-Border, and Copyright
  - AI datasets often involve copyrighted material and personal information; on-chain verifiability does not, by itself, settle whether the data was lawfully sourced.
  - Enterprise clients will ask about key custody, deletion rights, and data residency. There is an inherent tension between permanent storage and the "right to be forgotten," which requires product and legal teams to design the solution together.
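One commonly discussed pattern for the tension between permanent storage and deletion rights is crypto-shredding: write only ciphertext to the permanent layer, keep each object's key in a key store that supports deletion, and "forget" an object by destroying its key. The sketch below illustrates the pattern; `ShreddableStore` and its methods are hypothetical, and the SHA-256 keystream is a toy stand-in for a real authenticated cipher. Whether key destruction satisfies a given legal standard for erasure remains a question for the product and legal teams.

```python
import hashlib
import secrets


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy SHA-256 counter-mode keystream. Illustration only: not a vetted,
    authenticated cipher; use a real AEAD (e.g. AES-GCM) in practice."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def xor(data: bytes, stream: bytes) -> bytes:
    return bytes(a ^ b for a, b in zip(data, stream))


class ShreddableStore:
    """Ciphertext goes to an append-only (permanent) layer; keys stay in a deletable key store."""

    def __init__(self) -> None:
        self.permanent = {}  # object_id -> (nonce, ciphertext); never deleted
        self.keys = {}       # object_id -> key; stands in for a KMS that supports deletion

    def put(self, object_id: str, plaintext: bytes) -> None:
        key, nonce = secrets.token_bytes(32), secrets.token_bytes(16)
        self.keys[object_id] = key
        self.permanent[object_id] = (nonce, xor(plaintext, keystream(key, nonce, len(plaintext))))

    def get(self, object_id: str) -> bytes:
        if object_id not in self.keys:
            raise KeyError(f"{object_id}: key destroyed, ciphertext is unreadable")
        nonce, ciphertext = self.permanent[object_id]
        return xor(ciphertext, keystream(self.keys[object_id], nonce, len(ciphertext)))

    def forget(self, object_id: str) -> None:
        """'Delete' by destroying the key; the ciphertext itself remains stored."""
        self.keys.pop(object_id, None)


store = ShreddableStore()
store.put("user-123/profile", b"personal data")
print(store.get("user-123/profile"))          # b'personal data'
store.forget("user-123/profile")
print("user-123/profile" in store.permanent)  # True: ciphertext is still on the permanent layer
```

After `forget`, calling `get` raises `KeyError`, even though the permanent layer still holds the bytes.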

Conclusion: Align Expectations with Use Cases and Rely on Verifiable Evidence, Not Slogans

The "AI + Storage" narrative is trending, but real usability depends on clarifying the workload: whether objects are for cold archiving or hot reads; what the throughput and latency SLOs are; how key-management and compliance responsibilities are allocated contractually; and whether token incentives are aligned with actual payments. The four routes (permanent layer, verifiable cloud, on-chain-ecosystem object storage, and full-stack modular storage) can coexist but are not interchangeable. The permanent layer is strong in long-term consistency and public replay; verifiable clouds excel at billing and orchestration; S3-compatible solutions lower migration costs; and full-stack modular approaches offer an all-in-one narrative but require each module's maturity to be validated.

The final filter is simple: first, check whether verifiable usage and customer cases support the narrative; then compare TCO and latency on an equal basis; only then discuss tokens and valuation. This order avoids common misconceptions, such as treating DA as a "corpus warehouse" or compute projects as "storage infrastructure."

Disclaimer: This article compiles technical and industry information and does not constitute any form of investment advice. Details regarding mainnet phases, partners, and performance metrics may change with official updates. Please refer to the latest white papers, documentation, and audit disclosures from project teams.
