If you don't understand AI by the end of this, the next decade will confuse you

Most people think AI is a chatbot.

I get it. You open ChatGPT, ask it to fix your email, and it does. Feels like magic. You walk away thinking you understand what's going on. But that's like swiping a credit card at a restaurant and thinking you understand how Visa makes money. You used the product. You didn't see the system.

I spent the better part of last year trying to figure out where the real money in AI actually flows, and the honest answer is that it took me embarrassingly long to stop looking at the wrong layer. I kept staring at ChatGPT and Claude and Gemini, the stuff you can touch, while $700 billion was being quietly deployed into infrastructure I couldn't even name. Chips I'd never heard of. Packaging technologies with acronyms that sound made up. Cooling systems. Power plants. Concrete being poured in Texas, Iowa, and Hyderabad.

Nobody I know was talking about any of this a year ago. They are now.

This article is going to be long. If you don't have time right now, bookmark it and come back. I want to walk through the entire AI value chain, every layer, from the electricity that powers the data centres to the app on your phone, and I want to do it in a way that makes sense even if you've never read an annual report in your life.

I'll explain the jargon when I use it. I'm going to attach real numbers to every claim. And I'm going to be honest about the parts I'm still not sure about, because there are a few.

Let's begin.

I - The five-layer cake (and why nobody talks about the bottom four)

AI is infrastructure. Just like the internet, just like electricity, it needs factories. ~ Jensen Huang

The way most people understand AI goes something like this: a smart computer answers questions.

That's like saying the internet is "a place where you watch videos." Technically not wrong. But it misses the entire point.

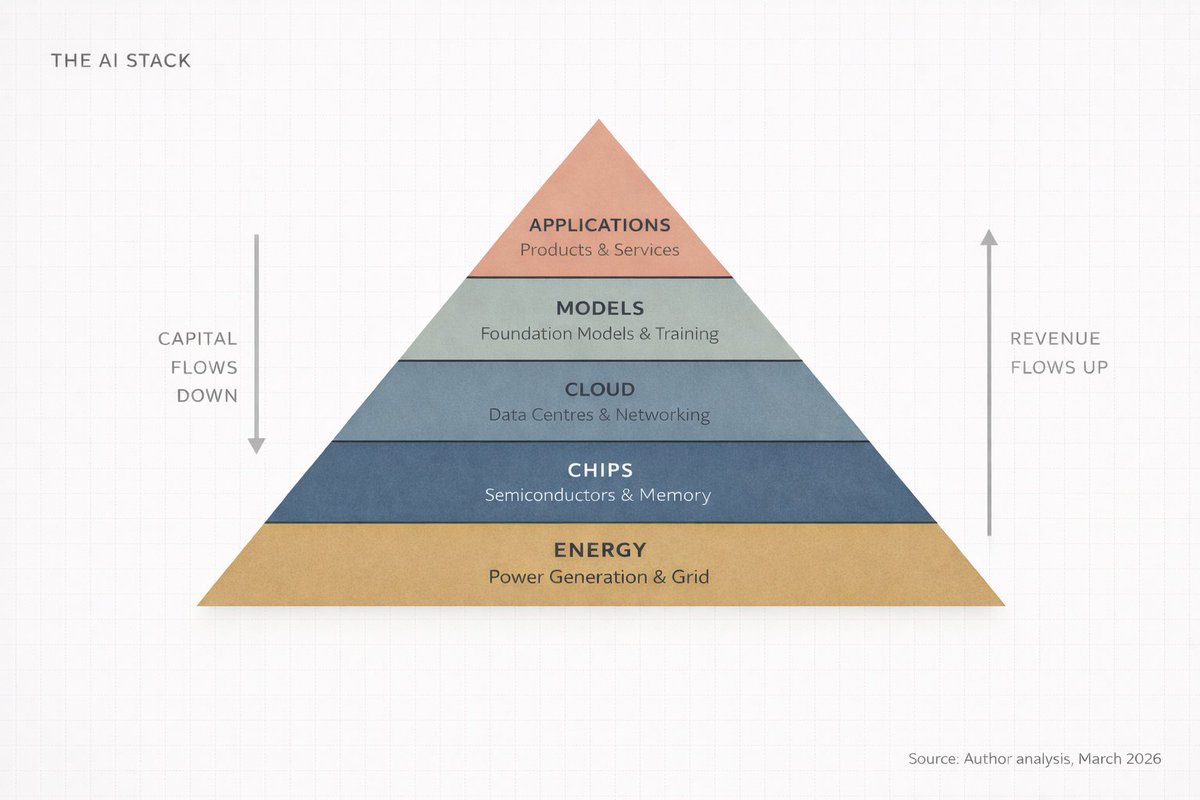

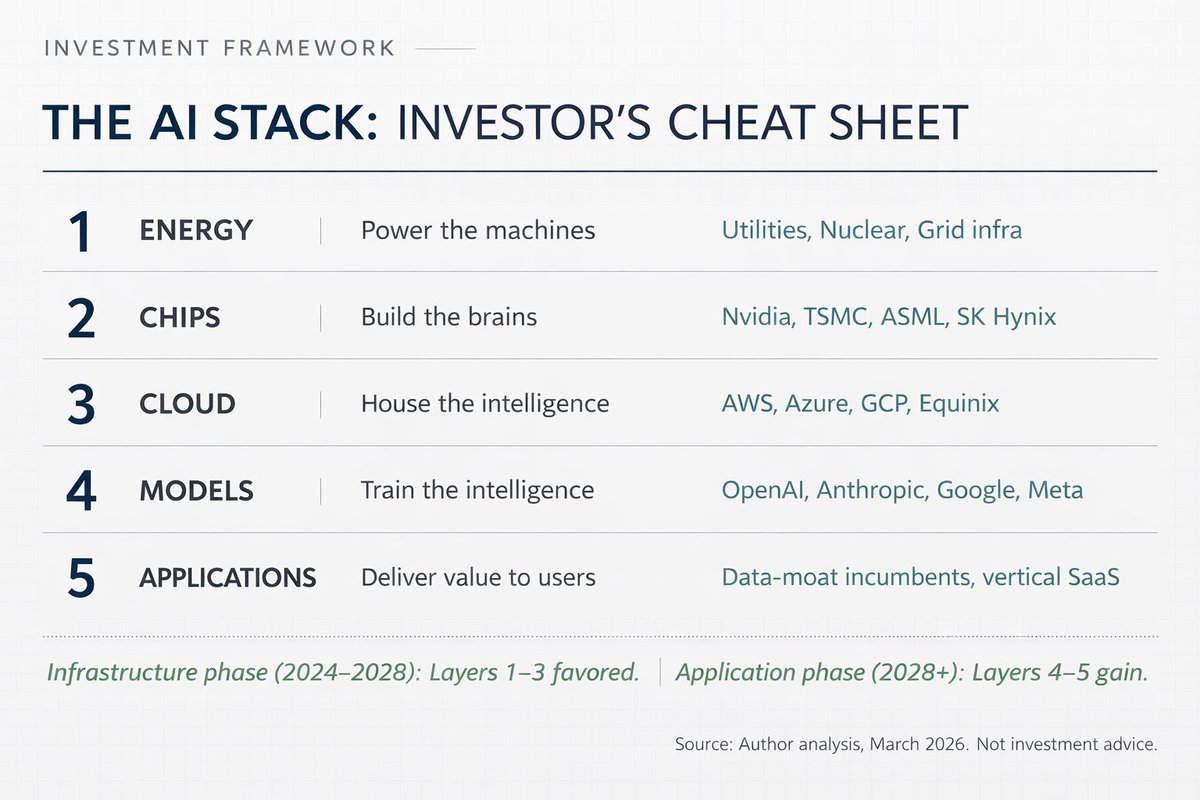

Jensen Huang, CEO of Nvidia, described AI at Davos in January 2026 as a five-layer system. Energy. Chips. Cloud. Models. Applications. He called the whole thing "the largest infrastructure buildout in human history."

Think about that word for a second. Infrastructure. Roads. Power grids. Water systems. These are the things that make civilisation work, and nobody thinks about them until they break. AI is becoming that kind of thing. Invisible, essential, and enormously expensive to build.

I call this the AI Stack. Five layers, stacked on top of each other, where each layer feeds the one above it, and the money flows both ways.

Here's the simplest version I can give you:

- Energy. You need electricity to power the computers. Lots of it.
- Chips. You need specialised processors to do the math. These are not your laptop's brain.
- Cloud. You need massive warehouses full of these chips, connected by insanely fast networks.
- Models. You need the actual AI software, the "brain" that learns patterns from data.
- Applications. You need products people actually use. ChatGPT. Google Search. Your bank's fraud detector.

Every conversation about AI that focuses only on Layer 5 is missing 80% of the picture.

And here's the part that matters if you're an investor, a founder, or just someone trying to understand where the world is going: the money doesn't flow evenly across these layers. It concentrates. It compounds. And right now, it's concentrating in places most people aren't looking.

II - Follow the money (it's not where you think)

Everyone's mind goes to the application layer. ChatGPT. Copilot. Claude. Perplexity. These are the products you touch, so they feel like the whole story.

But here's what everyone misses.

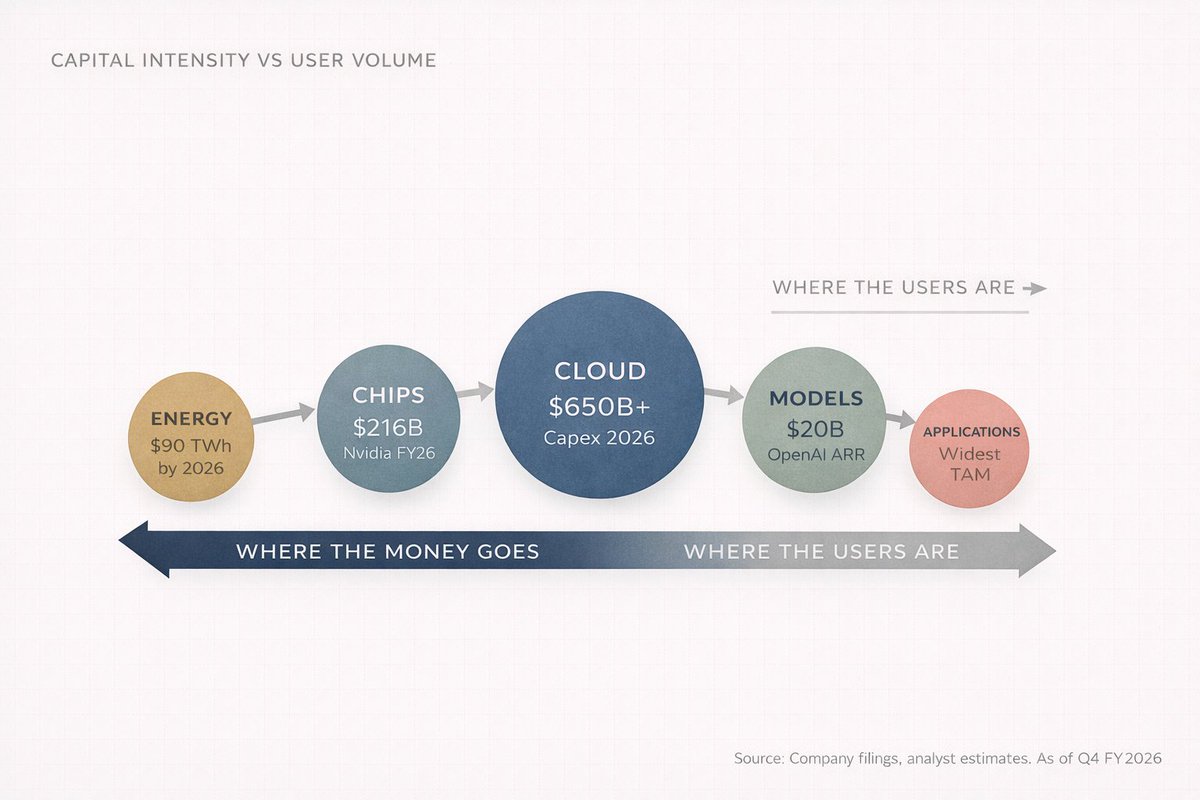

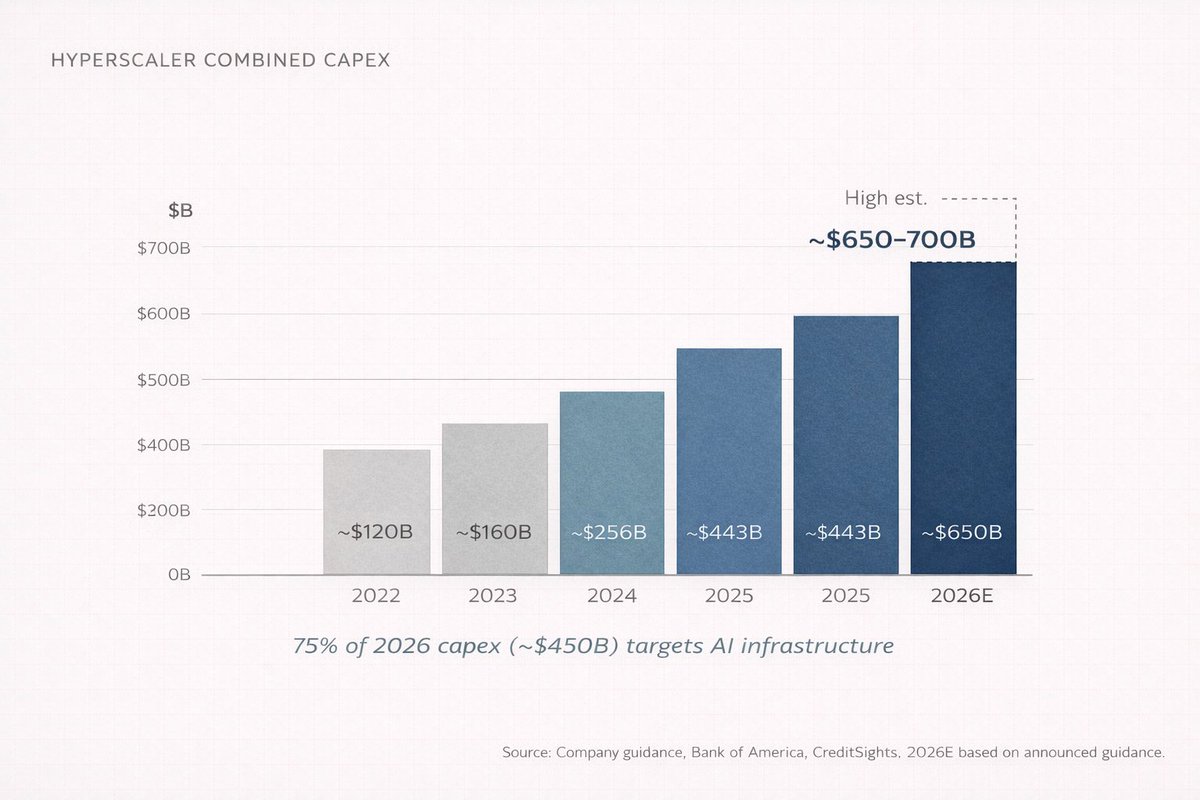

In 2026, the four biggest cloud companies (Amazon, Microsoft, Google, and Meta) are on track to spend somewhere between $650 billion and $700 billion on capital expenditures. Combined. In a single year. That is roughly equal to Switzerland's GDP. And roughly two-thirds of it, about $450 billion, is going directly into AI infrastructure.

Not into chatbots. Not into apps. Into buildings, chips, cables, and cooling systems.
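
If you want to check how much of the total that $450 billion represents, here is a minimal sketch in Python. It uses only the dollar figures quoted above; the variable and function names are illustrative and not taken from any source.

```python
# Figures quoted above, in billions of US dollars.
CAPEX_LOW = 650
CAPEX_HIGH = 700
AI_INFRA_SPEND = 450


def share_of_total(part: float, total: float) -> float:
    """Return part as a percentage of total."""
    return 100.0 * part / total


# The implied AI share depends on which end of the capex range you use.
share_at_low = share_of_total(AI_INFRA_SPEND, CAPEX_LOW)
share_at_high = share_of_total(AI_INFRA_SPEND, CAPEX_HIGH)

print(f"AI share if total capex is ${CAPEX_LOW}B: {share_at_low:.0f}%")
print(f"AI share if total capex is ${CAPEX_HIGH}B: {share_at_high:.0f}%")
```

On these inputs the implied share lands between roughly 64% and 69%, depending on which end of the capex range you take.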

Nobody talks about this stuff at cocktail parties. That's how you know it's where the money is.

Because think about it. Before anyone can use ChatGPT, someone has to build a data centre the size of a shopping mall, fill it with tens of thousands of specialised processors, connect them with networking equipment that costs more than most companies are worth, and then feed the whole thing enough electricity to power a small city. Every single day.

That's Layer 1 through Layer 3. The invisible layers. The layers where the serious capital is being deployed.

"But what about OpenAI? Aren't they making billions?"

They are. OpenAI hit $20 billion in annualised recurring revenue by the end of 2025, up from $6 billion a year earlier and $2 billion the year before that. That's 10x growth in two years. No company in history has scaled revenue that fast from that base.

But here's the catch. OpenAI burned roughly $9 billion in cash in 2025 and projects $17 billion in cash burn for 2026. Their inference costs (the cost of actually running the AI when you ask it a question) hit $8.4 billion in 2025 and are projected to reach $14.1 billion in 2026. They don't expect to turn cash-flow positive until 2029 or 2030.
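
The growth and burn figures are easier to compare side by side. The sketch below is a rough back-of-envelope calculation using only the numbers quoted above. One loud assumption: it divides 2025 inference costs by year-end annualised revenue, which is a run-rate rather than the revenue actually booked during the year, so the resulting ratio is probably a floor rather than a precise figure.

```python
# Annualised recurring revenue at year end, in billions of US dollars,
# as quoted in the text.
arr_by_year = {2023: 2.0, 2024: 6.0, 2025: 20.0}

# 2025 figures and 2026 projections quoted in the text, in billions.
inference_cost = {2025: 8.4, 2026: 14.1}
cash_burn = {2025: 9.0, 2026: 17.0}

# Total growth multiple across the two-year span.
two_year_multiple = arr_by_year[2025] / arr_by_year[2023]

# Compound annual growth rate implied by that multiple, in percent.
cagr_pct = (two_year_multiple ** (1 / 2) - 1) * 100

# Assumption: year-end ARR stands in for 2025 revenue (see lead-in).
inference_share_pct = 100 * inference_cost[2025] / arr_by_year[2025]

# Projected year-over-year increase in cash burn, in percent.
burn_growth_pct = (cash_burn[2026] / cash_burn[2025] - 1) * 100

print(f"Two-year revenue multiple: {two_year_multiple:.0f}x")
print(f"Implied annual growth rate: {cagr_pct:.0f}%")
print(f"Inference cost vs year-end ARR (2025): {inference_share_pct:.0f}%")
print(f"Projected cash burn growth, 2025 to 2026: {burn_growth_pct:.0f}%")
```

Read this way, the burn is projected to grow by nearly 90% in a single year, on top of a revenue base that has itself been more than tripling annually.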

So where does that burned cash go?

It flows downward through the stack. To Microsoft Azure (OpenAI pays Microsoft 20% of its total revenue through 2032). To Nvidia for chips. To the companies building and equipping data centres. To the power companies generating electricity.

There's something almost circular about it if you stare at it long enough. Microsoft invests in OpenAI. OpenAI spends that money on Azure. Azure uses the revenue to buy more Nvidia chips. Nvidia reports record earnings. Everyone celebrates. And the cash keeps flowing downhill.

Most of the users are at the top of the stack. Most of the profit is at the bottom. That disconnect is the entire thesis.

This is the first lesson of the AI value chain: revenue flows up, capital flows down.

III - You've seen this movie before

All of humanity's problems are engineering problems, and engineering problems can be solved. ~ Buckminster Fuller

If you want to understand what's happening with AI, study what happened with electricity between 1880 and 1920.

When Thomas Edison built the first commercial power station in 1882 on Pearl Street in Manhattan, people thought electricity was a novelty. A fancy way to light a room. Why would anyone need this when gas lamps worked perfectly well?

Within 40 years, electricity had reorganised every industry on earth. Manufacturing. Transportation. Communication. Medicine. Entertainment. The companies that won weren't the ones that invented the lightbulb. They were the ones who built the power plants, laid the copper wire, and manufactured the generators.

General Electric. Westinghouse. The utility companies. The copper miners. The builders.

The same pattern is playing out with AI, just compressed into years instead of decades.

AI → data centres → chips → raw materials → energy

Electricity → factories → machines → raw materials → coal/water

The arrow progression is almost identical. And the winners, again, are not primarily at the application layer. They're at the infrastructure layer.

I call this Infrastructure Gravity. Every time a new computing platform emerges, initial wealth creation happens in the picks and shovels. The applications come later. The applications get all the press. But the infrastructure gets all the margin.

Nvidia posted $215.9 billion in full-year revenue for fiscal 2026 (ending January 2026), up 65% from the prior year. Its data centre segment alone did $62.3 billion in the final quarter, growing 75% year-over-year. That single segment now represents over 91% of Nvidia's quarterly revenue. Think about that: a company doing $68 billion in a quarter, and nine out of ten of those dollars come from one business line.
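
Those percentages can be checked, and the prior-year figures backed out, with a few lines of arithmetic. This is a sketch that treats the quoted growth rates as exact, which they are not (they are rounded), so the implied prior-year numbers are approximations. The constant names are illustrative.

```python
# Figures quoted above, in billions of US dollars.
FULL_YEAR_REVENUE = 215.9
FULL_YEAR_GROWTH = 0.65          # up 65% year over year
Q4_TOTAL_REVENUE = 68.0
Q4_DATA_CENTRE_REVENUE = 62.3
Q4_DATA_CENTRE_GROWTH = 0.75     # up 75% year over year

# Data centre revenue as a share of the quarter's total, in percent.
data_centre_share_pct = 100 * Q4_DATA_CENTRE_REVENUE / Q4_TOTAL_REVENUE

# Back out the prior-year figures implied by the growth rates.
implied_prior_year_revenue = FULL_YEAR_REVENUE / (1 + FULL_YEAR_GROWTH)
implied_prior_q4_data_centre = Q4_DATA_CENTRE_REVENUE / (1 + Q4_DATA_CENTRE_GROWTH)

print(f"Data centre share of Q4 revenue: {data_centre_share_pct:.1f}%")
print(f"Implied prior full-year revenue: ${implied_prior_year_revenue:.1f}B")
print(f"Implied prior-year Q4 data centre revenue: ${implied_prior_q4_data_centre:.1f}B")
```

In other words, the data centre segment in one quarter is now worth close to half of what the whole company is implied to have earned in the entire prior fiscal year.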

TSMC, the company that physically manufactures Nvidia's chips (and nearly everyone else's), captured almost 70% of the global foundry market in 2025, with $122.5 billion in sales. Samsung, the nearest competitor, had 7.2%. That is dominance at a level that would make Standard Oil uncomfortable.

The infrastructure always wins first. The question is how long the window stays open.

Ask anyone what the internet revolution was about, and they'll say Google, Amazon, and Facebook. Ask where the early money was actually made, and the answer is Cisco, Corning, and the companies laying fibre. Same story. Different decade.

IV - The part nobody wants to hear

The stock market is a device for transferring money from the impatient to the patient. ~ Warren Buffett

I'll be honest about something. When I first started paying attention to AI as an investor, I made the same mistake most people make.

I looked at the application layer. I saw ChatGPT growing. I saw Anthropic raising billions. I thought: the AI companies will win, so invest in AI companies.

Three things changed my mind. And they happened in sequence, which is important because each one built on the last.

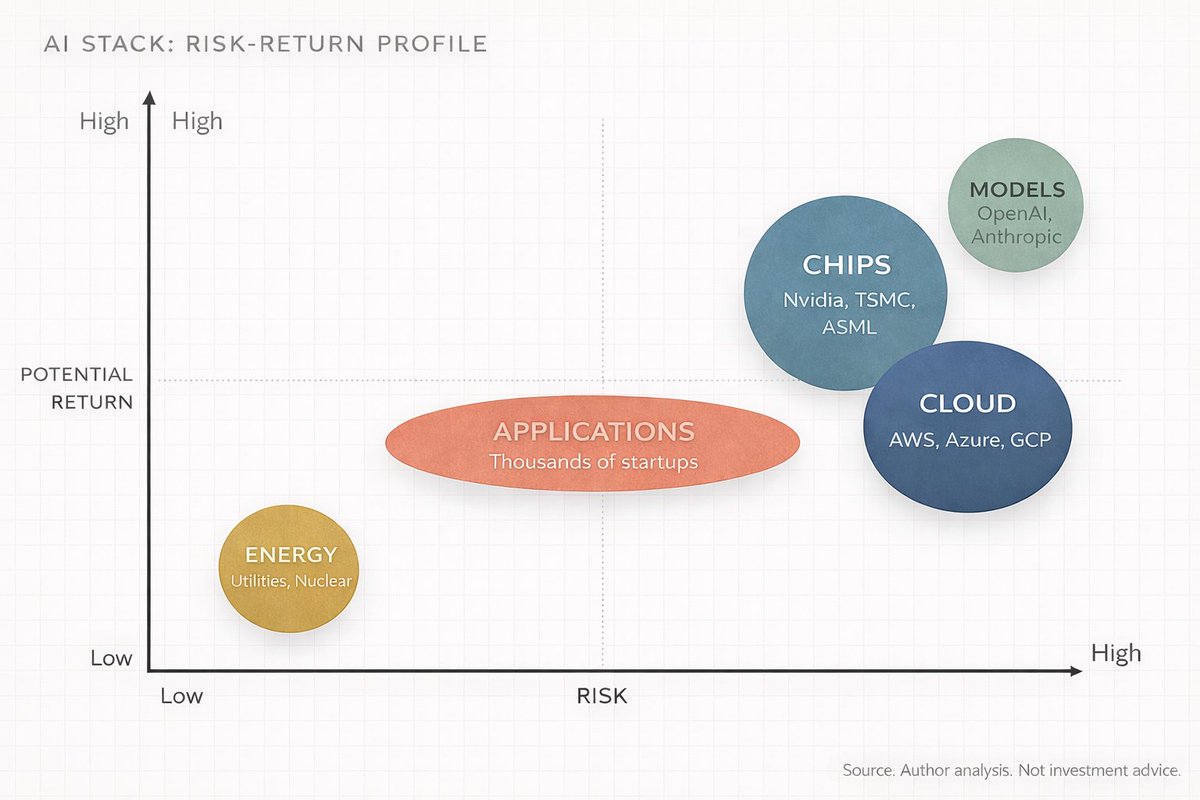

First, I noticed that nearly every "AI company" was hemorrhaging cash. OpenAI, Anthropic, Mistral, xAI. All of them are burning cash faster than they earn it. Not because they're bad businesses, but because the compute costs are structural. Every time you ask an AI model a question, it costs real money to generate that answer. And the smarter the model gets, the more compute it needs, which means it costs more to run.

The companies you think of as "AI winners" are actually the ones spending the most.

Second, I realised that the infrastructure companies were printing money at margins I hadn't seen since the early days of Google. Nvidia's gross margins were hovering around 75%. TSMC was expanding capacity and raising prices simultaneously because demand so dramatically exceeded supply. These companies don't have a "when will we monetise" problem. They have a "we literally cannot build fast enough" problem. Those are very different problems to have.

Third, and this was the uncomfortable one, I realised I'd been thinking about AI like a consumer rather than an engineer. The consumer sees the app. The engineer sees the stack.

Once you see the stack, you can't unsee it.

Every AI announcement becomes a capex announcement. Every model improvement becomes a chip order. Every new feature becomes a data centre lease. The whole industry starts looking like a series of concentric circles, and the further toward the centre you go, the more concentrated the profits become.

So, maybe you're a software engineer who's been following AI models. Maybe you're a retail investor who bought Nvidia at $300 and is trying to figure out what's next. Maybe you're someone in India watching this entire revolution from a distance, wondering how any of it connects to your portfolio.

(Or maybe you're all three, which is the most interesting position to be in.)

Wherever you are, the principle is the same. The consumer sees the product. The investor sees the supply chain. The best investors see the supply chain before the product even ships.

Of course, this all sounds neat and tidy in hindsight. It wasn't. I spent weeks going back and forth on this. I had to unlearn a lot of pattern-matching from the SaaS era, where most of the value accrued at the application layer. I kept wanting to find the "next OpenAI" when I should have been looking at who OpenAI was writing cheques to. AI is structurally different from SaaS. The compute requirements are so massive that the infrastructure layer captures more value, at least in this phase of the cycle.

Understanding the stack changes how you read every headline. It changes how you evaluate every company. It changes how you allocate capital.

V - The investor's map: a layer-by-layer breakdown

Okay, this is getting long, so I'm going to speed things up. Here's the exact breakdown of each layer of the AI Stack, what happens there, who the important players are, and where the investment opportunities sit.

Stick with me.

Layer 1: Energy

AI data centres are extraordinarily power-hungry. A single large AI training run can consume as much electricity as a small town uses in a year. Taken together, AI data centres are projected to consume around 90 terawatt-hours of electricity annually by 2026, roughly a tenfold increase from 2022 levels.

This creates a straightforward investment thesis: whoever can generate, transmit, and deliver reliable power to data centres will benefit. Nuclear, natural gas, and renewable energy companies near major data centre clusters. Utility companies with excess capacity. Companies building grid infrastructure.

Jensen Huang said it plainly in October 2025: "Data centre self-generated power could move a lot faster than putting it on the grid." Companies are already building dedicated power generation attached directly to their data centres, bypassing the grid entirely. That part surprised me. These tech companies are basically becoming their own utility providers.

Who benefits: utility companies (especially those with nuclear capacity), independent power producers, companies manufacturing transformers, switchgear, and other electrical infrastructure. In India, companies in the power equipment and transmission space stand to benefit as hyperscaler campuses expand across Asia.

Layer 2: Chips

This is the layer most people have heard of, because of Nvidia. But it's more complex than one company.

The chip layer has its own sub-layers, each with a different competitive structure. At the top, you have the designers, the companies that architect the chips: Nvidia (GPUs), AMD (GPUs and CPUs), Broadcom (custom ASICs), Qualcomm, and increasingly the hyperscalers themselves (Google's TPUs, Amazon's Trainium, Microsoft's Maia). Then you have the manufacturers. TSMC dominates fabrication, with nearly 70% of the global foundry market share. Samsung is a distant second at 7.2%. Intel is trying to rebuild its foundry business, but that's a multi-year project with no guaranteed outcome.

Then there's the equipment layer, the companies that make the machines that make the chips. ASML is the only company on earth that makes the extreme ultraviolet lithography machines required for the most advanced chips. Applied Materials, Lam Research, and Tokyo Electron sit alongside them. Below that, you've got memory (AI models need enormous amounts of high-bandwidth memory, and SK Hynix, Samsung, and Micron are the three players that matter) and packaging (advanced chip packaging like TSMC's CoWoS technology has become a genuine bottleneck).

The concentration here is something I keep coming back to. Nvidia holds an estimated 92% share of the AI data centre GPU market. TSMC manufactures chips for Nvidia, AMD, Broadcom, Qualcomm, Apple, and nearly every other major chip designer. ASML is literally the only supplier of EUV lithography machines on the planet.

One company designs. One company builds. One company makes the machine that builds. That level of concentration is both an investment thesis and a geopolitical risk. And I don't think enough people sit with both of those ideas at the same time.

Layer 3: Cloud and data centres

This is where the chips live. Massive warehouse-scale facilities packed with servers, connected by high-speed networking, and kept cool by increasingly elaborate thermal management systems (liquid cooling is becoming standard, not a nice-to-have).

The market is dominated by three hyperscalers: Amazon Web Services (31% market share), Microsoft Azure (24%), and Google Cloud (11%). Oracle is aggressively growing in this space too, with a $50 billion capex target for 2026.

But the cloud layer goes much deeper than the hyperscalers. Foxconn (Hon Hai) now assembles about 40% of the world's AI servers. Arista Networks and Credo Technology (whose stock rose 117% in 2025 on energy-efficient data transfer) build the networking infrastructure that connects everything. Vertiv handles liquid cooling. Data centre REITs like Equinix and Digital Realty own the land and buildings. Someone even has to pour the concrete. Every layer has its own supply chain.

The hyperscalers are spending 90% of their operating cash flow on capex in 2026, according to Bank of America estimates. That's up from 65% in 2025. Morgan Stanley expects these companies to borrow over $400 billion this year to fund the buildout, more than double 2025's $165 billion. That number stopped me in my tracks when I first read it. $400 billion in debt issuance in a single year, just to build computer warehouses.
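
To make the squeeze concrete, here is a small sketch of what those ratios mean per $100 of operating cash flow, plus the year-over-year jump in borrowing. It uses only the estimates quoted above; the dictionary and function names are illustrative.

```python
# Estimates quoted above. Debt issuance is in billions of US dollars.
CAPEX_PCT_OF_OCF = {2025: 65, 2026: 90}
DEBT_ISSUANCE = {2025: 165, 2026: 400}


def cash_left_after_capex(capex_pct: int) -> int:
    """Dollars left per $100 of operating cash flow once capex is paid."""
    return 100 - capex_pct


left_2025 = cash_left_after_capex(CAPEX_PCT_OF_OCF[2025])
left_2026 = cash_left_after_capex(CAPEX_PCT_OF_OCF[2026])

# How many times larger the expected 2026 borrowing is than 2025's.
debt_multiple = DEBT_ISSUANCE[2026] / DEBT_ISSUANCE[2025]

print(f"Left per $100 of operating cash flow, 2025: ${left_2025}")
print(f"Left per $100 of operating cash flow, 2026: ${left_2026}")
print(f"2026 borrowing vs 2025: {debt_multiple:.1f}x")
```

The cushion left over after capex shrinks from $35 to $10 per $100 of operating cash flow, which goes some way toward explaining why the borrowing estimate is roughly 2.4 times the prior year's.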

Layer 4: Models

This is the "brain" layer. The companies that train and build the actual AI models.

The big names: OpenAI (GPT series, $20B+ ARR), Anthropic (Claude, reportedly around $19B annualised revenue by early 2026), Google DeepMind (Gemini), Meta AI (Llama, open source), Mistral, and xAI (Elon Musk's company, building Grok).

This layer fascinates me because it's simultaneously the most hyped and the most unprofitable. OpenAI's revenue is growing at a pace we've never seen, but the company projects $17 billion in cash burn for 2026. Anthropic is growing fast but is heavily reliant on massive funding rounds ($5B at a reported $170B valuation in early 2026).

The business model problem is structural: models get better when you spend more on compute, but that spending grows faster than revenue. It's a bit like running a restaurant where every dish requires more expensive ingredients than the last, but customers expect the price to stay the same. The margins stay compressed. I don't know when that changes. Maybe it doesn't.

For investors, this layer is high risk, high potential reward. Most of these companies are private. Your public market exposure comes through the cloud providers who host them (Microsoft owns a large stake in OpenAI and runs its compute on Azure) and through the chip companies whose products get consumed during training.

Layer 5: Applications

This is the layer you see every day. ChatGPT. Google Search powered by Gemini. Microsoft Copilot in Office. AI-powered fraud detection at your bank. Netflix recommendations. Your phone's photo enhancement.

The application layer is the widest and most crowded. Thousands of startups and incumbents are building here. It will eventually be the largest layer by total addressable market (some estimates put it above $2 trillion by the early 2030s), but right now it's also the layer with the thinnest margins and the greatest uncertainty about who will win.

The differentiator at this layer is data. Companies with unique, proprietary data will build durable advantages. Salesforce has enterprise CRM data. Bloomberg has financial data. Epic has healthcare records. Companies sitting on that kind of proprietary data moat can fine-tune AI models in ways that a generic chatbot cannot.

For investors, the application layer is where the biggest upside eventually lives, but also where most of the capital will be destroyed. Most AI startups will fail. The ones that survive will compound aggressively.

The best returns over the next 3 to 5 years probably look like this: infrastructure now, applications later. The smartest capital is already positioned accordingly.

The companies that will actually win at Layer 5 are the ones sitting on data nobody else can get. And most of them don't even call themselves AI companies yet.

VI - "But isn't this just a bubble?"

The investor's chief problem, and even his worst enemy, is likely to be himself. ~ Benjamin Graham

Let me address the elephant directly.

"But what about the dot-com bust? Isn't this the same thing? Massive infrastructure spending, no profits, everyone caught up in hype?"

Fair question. It deserves a serious answer.

Here's the difference. During the dot-com era, companies were spending on infrastructure for demand that hadn't yet materialised. They were building fibre-optic networks and web servers for an internet audience that was still on dial-up. The infrastructure was built, demand didn't materialise for another 5 to 7 years, and everything in between got liquidated.

In 2026, AI demand is already here. Nvidia can't make chips fast enough. TSMC's advanced packaging capacity is sold out. Cloud computing rental prices are rising, not falling. OpenAI added 400 million weekly active users between March and October 2025 alone. The models are being used. The compute is being consumed. The customers are paying.

That doesn't mean there's no risk. There is enormous risk. And I think about it more than I'd like to admit. Three things in particular:

First, capital misallocation. Companies are spending $650 billion+ on data centres in 2026. If the revenue from AI services doesn't materialise fast enough to justify that spend, some of these companies will face serious margin compression. Amazon's free cash flow may actually go negative this year. That's Amazon. The company that basically invented cloud computing.

Second, concentration risk. The AI supply chain is dangerously concentrated. TSMC holds nearly 70% of the global foundry market. ASML is the sole supplier of EUV machines. Nvidia holds an estimated 92% of the AI data centre GPU market. Any disruption (geopolitical, natural disaster, or competitive) could ripple through the entire stack. A single earthquake in Hsinchu, Taiwan, could set back global AI development by years. That thought should make you uncomfortable.

Third, the DeepSeek question. In January 2025, Chinese AI lab DeepSeek released a model that approached frontier performance at a fraction of the training cost. This challenged the assumption that greater spend automatically equates to better AI. If open-source and efficient models continue to close the gap, the infrastructure spending thesis weakens. I don't think DeepSeek killed the thesis. But it introduced a variable that wasn't there before, and variables like that don't just go away.

But here's the frame I keep coming back to. McKinsey estimates that cumulative data centre investment could reach $6.7 trillion globally by 2030. PwC estimates AI could contribute $15.7 trillion to global GDP by 2030. IDC projects AI solutions and services will generate $22.3 trillion in cumulative impact by 2030.

Even if those numbers are 50% wrong, we're still talking about the largest technology-driven economic shift since the internet. The question is about magnitude, not direction.
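
As a quick illustration of that "50% wrong" scenario, the sketch below halves each of the three estimates quoted above. Note that the three figures measure different things (investment, GDP contribution, and cumulative impact), so they are kept separate rather than added together. The labels and the `HAIRCUT` name are illustrative.

```python
# Estimates quoted above, in trillions of US dollars, all for 2030.
estimates_trillions = {
    "McKinsey, cumulative data centre investment": 6.7,
    "PwC, AI contribution to global GDP": 15.7,
    "IDC, cumulative impact of AI solutions and services": 22.3,
}

# Assume every estimate overshoots and the true figure is half as large.
HAIRCUT = 0.5

halved_estimates = {
    label: value * (1 - HAIRCUT)
    for label, value in estimates_trillions.items()
}

for label, value in halved_estimates.items():
    print(f"{label}: ${value:.2f} trillion after a 50% haircut")
```

Even the smallest of the halved figures is still above $3 trillion.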

I keep hearing people say "I'm sceptical about AI," as if it's a position. Fine. Be sceptical about the models. Be sceptical about the timelines. But don't be ignorant about the supply chain. Those are different things. One is a healthy intellectual posture. The other will cost you money.

Five years from now, the names that won this cycle will feel obvious. They always do. The game right now is seeing the structure before everyone else catches up.

VII - Play the game at the right layer

Think about AI as a video game with five levels, stacked on top of each other.

Level 1 (Energy) is the tutorial stage. Essential, unglamorous, and almost impossible to lose if you play it. Low risk, steady returns. Think of these as the quest givers who never die but always pay.

Level 2 (Chips) is the boss fight. Highest concentration of power, highest margins, but also the layer most exposed to disruption and geopolitical risk. The rewards are massive, but the difficulty is set to hard.

Level 3 (Cloud) is the multiplayer server. Everyone plays here. The hyperscalers are the server administrators. They take a cut of everything.

Level 4 (Models) is the PvP arena. Brutal competition, rapid innovation, and most players get eliminated. Only the best-equipped survive.

Level 5 (Applications) is the open world. Infinite possibilities, but no guaranteed loot. You have to find your own quests.

The meta-strategy is simple. You don't have to play all five levels. Most people try to play Level 5 because it's the most visible. The smart money is farming Level 2 and Level 3 because that's where the XP is highest right now.

Where you are in the stack determines what you should focus on.

For the non-technical: you don't need to understand how a GPU works. You need to understand that someone has to make them, someone has to house them, and someone has to power them. And those "someones" are publicly traded companies with quarterly earnings you can read.

For the technical: you already know the models are getting better. What you might be underestimating is how fast the physical constraints (power, cooling, chip packaging) are becoming the binding bottleneck. The next decade of AI progress will be won or lost in engineering, not in architecture papers.

For the investor: the AI value chain is five trades stacked on top of each other, each with a different risk-reward profile, different time horizon, and different set of winners. Treating "AI" as a single sector is like treating "technology" as a single sector in 1998. The spread between the best and worst outcomes within "AI" is enormous.

This won't last forever. At some point, the infrastructure buildout will mature. The application layer will consolidate. And the value will shift upward in the stack, just like it did with the internet. Amazon, Google, and Facebook (the application layer of the internet era) eventually captured more value than the fibre-optic cable companies and server manufacturers.

But we're not there yet with AI. We're in the infrastructure phase. The picks-and-shovels phase. And the picks and shovels are printing money.

The people who understand the full stack will see the transitions before they happen. Everyone else will be surprised, over and over, by where the money actually goes.

In 10 years, understanding the AI stack will be as basic as understanding a balance sheet.

Learn the stack. Map the layers. Follow the capital.

That's the game.

~ Anish Moonka

Disclaimer:

- This article is reprinted from [AnishA_Moonka]. All copyrights belong to the original author [AnishA_Moonka]. If there are objections to this reprint, please contact the Gate Learn team, and they will handle it promptly.
- Liability Disclaimer: The views and opinions expressed in this article are solely those of the author and do not constitute any investment advice.
- Translations of the article into other languages are done by the Gate Learn team. Unless mentioned, copying, distributing, or plagiarizing the translated articles is prohibited.
