Tether Launches New AI Framework: Billion-Parameter Models Trainable on Mobile as Decentralized AI Era Accelerates

Event Overview: Tether Moves Into the AI Infrastructure Sector

Image source: Official Tether Announcement

The convergence of AI and the crypto industry is accelerating. Against this backdrop, Tether is evolving from a traditional stablecoin issuer into a cross-industry technology player.

The recently launched QVAC Fabric AI framework marks Tether’s official entry into the AI infrastructure space. Its core feature is that consumer devices such as smartphones can train (more precisely, fine-tune) AI models with a billion or more parameters.

According to public sources, its performance is as follows:

- 100 million parameter model: Training completes in a few minutes
- 1 billion parameter model: About 1–2 hours
- Maximum supported size: Scalable up to 13 billion parameters

This capability significantly lowers the barriers to AI development, making local training of large models possible.

Strategically, this represents a major step by Tether in the AI and computing power sectors, signaling its expansion beyond financial infrastructure into a composite ecosystem of “data + computing power + AI.”

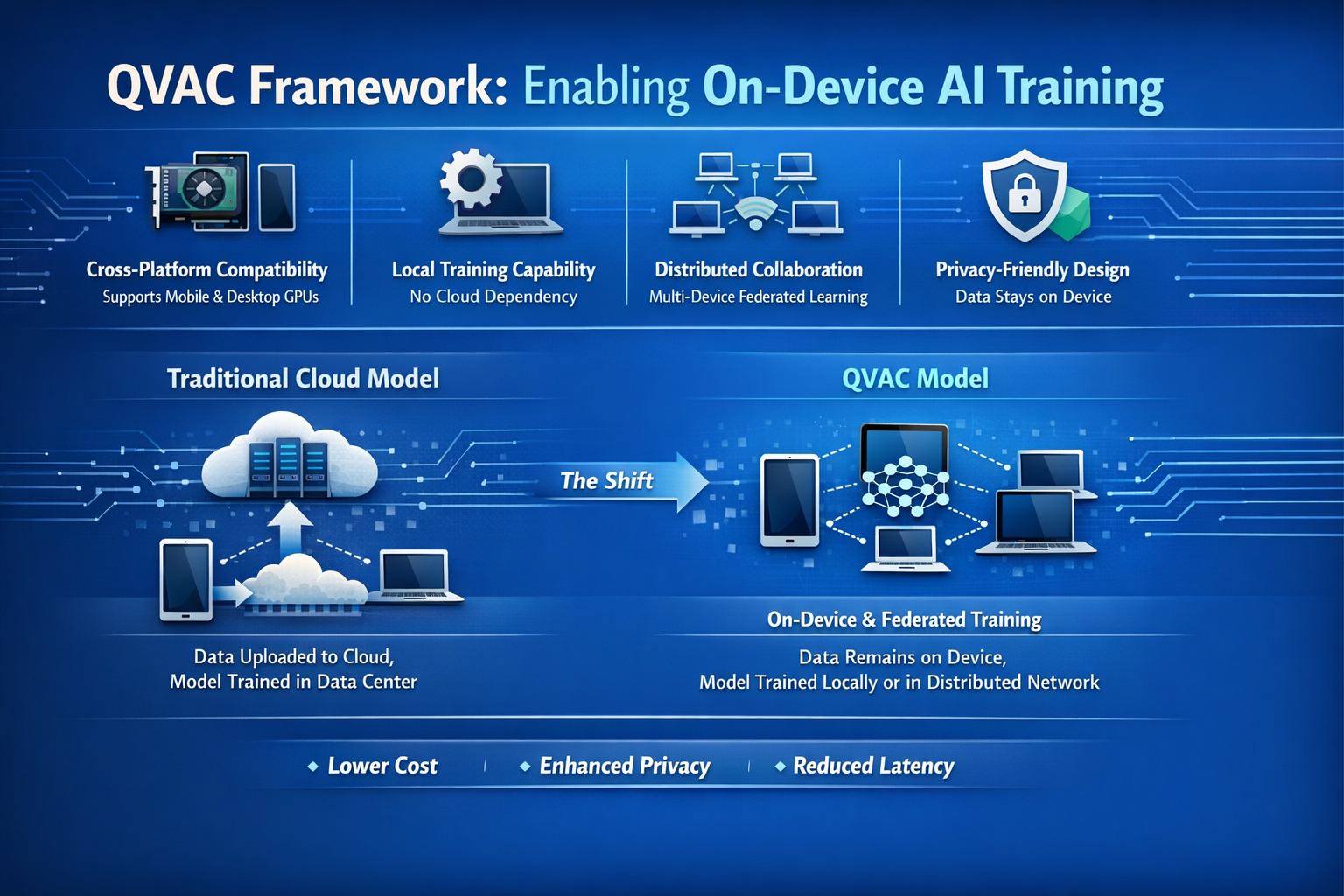

QVAC Framework Analysis: How On-Device Training Is Achieved

QVAC’s primary goal is to move AI training from the cloud to end devices, enabling true “on-device AI.”

Its architecture offers several key features:

- Cross-platform compatibility: Supports multiple chip architectures, including mobile and desktop GPUs
- Local training capability: Eliminates reliance on cloud computing resources
- Distributed collaboration: Enables collaborative training across multiple devices
- Privacy-friendly design: Allows data to remain on the local device

This architecture fundamentally changes how AI operates:

Traditional model: Data is uploaded to the cloud, and models are trained in data centers.

QVAC model: Data stays on the device, and models are trained locally or across distributed networks.

This shift not only reduces costs but also provides significant advantages in privacy protection and latency control.

Core Technology Breakdown: Combining BitNet and LoRA

QVAC’s breakthrough is built on the integration of two key technologies.

BitNet: Ultra-Low Precision Model Architecture

BitNet is a low-bit model architecture that represents each weight with a 1-bit or ternary value (-1, 0, or +1) instead of a 16- or 32-bit number, dramatically reducing the memory and compute a model needs.
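As a rough illustration of what ternary weights look like in practice, the sketch below applies absmean quantization, the scheme described for BitNet b1.58, to a handful of weights. It is a minimal pure-Python example and not QVAC’s implementation; the function names `ternary_quantize` and `dequantize` and the sample weights are assumptions made for illustration.

```python
def ternary_quantize(weights, eps=1e-8):
    """Map each weight to -1, 0, or +1 and return one shared scale.

    The scale is the mean absolute value of the weights (absmean).
    """
    scale = sum(abs(w) for w in weights) / len(weights)
    # Divide by the scale, round to the nearest integer, then clip to [-1, 1].
    quantized = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Approximate the original weights from ternary values and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to show all three ternary values.
weights = [0.9, -0.05, -1.2, 0.3, 0.02, -0.6]
q, s = ternary_quantize(weights)
approx = dequantize(q, s)

print(q)  # [1, 0, -1, 1, 0, -1]
```

Each weight now needs only one of three values plus a single shared scale, which is where the memory savings come from; the gap between `weights` and `approx` is the precision that is given up.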

Key advantages:

- Substantial reduction in memory usage (up to 70% or more)
- Significant boost in inference efficiency
- Optimized for mobile device deployment

Essentially, this technology accepts some loss of precision in exchange for much greater computational efficiency.
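The memory side of this trade-off can be estimated with simple arithmetic. The sketch below compares weight storage for a 1-billion-parameter model at 16 bits per weight and at 2 bits per weight. Packing each ternary value into 2 bits is an assumption, and the result covers weights only (no activations, optimizer state, or runtime overhead), so it is not directly comparable to the overall memory figure quoted above.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Storage needed for the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 2**30


NUM_PARAMS = 1_000_000_000  # a 1B-parameter model

fp16_gib = weight_memory_gib(NUM_PARAMS, 16)
ternary_gib = weight_memory_gib(NUM_PARAMS, 2)  # assumed 2-bit packing
saving = 1 - ternary_gib / fp16_gib

print(f"fp16 weights:    {fp16_gib:.2f} GiB")
print(f"ternary weights: {ternary_gib:.2f} GiB")
print(f"weight-only saving: {saving:.1%}")
```

Under these assumptions the weights shrink from roughly 1.9 GiB to about 0.23 GiB, which is the difference between straining and comfortably fitting within a typical smartphone’s memory.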

LoRA: Cost-Effective Fine-Tuning Mechanism

LoRA (Low-Rank Adaptation) is a leading solution for fine-tuning large models. The core approach is to:

- Freeze the original model parameters
- Train only a small number of additional parameters

Key advantages:

- Dramatically reduced computational costs
- Much faster training
- Ideal for rapid iteration
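The freeze-and-adapt idea can be sketched in a few lines. The example below is a minimal pure-Python illustration of the standard LoRA formulation, in which the frozen weight matrix W is supplemented by a low-rank product B·A scaled by alpha / rank. It is not QVAC’s code; the class `LoRALinear`, the helper names, and the layer sizes are assumptions chosen for illustration.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Linear layer with a frozen weight W plus a trainable low-rank update."""

    def __init__(self, frozen_weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(frozen_weight), len(frozen_weight[0])
        self.W = frozen_weight  # frozen: never updated during fine-tuning
        # A (rank x d_in) starts small and random; B (d_out x rank) starts at
        # zero, so the adapted layer initially behaves exactly like the base.
        self.A = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.W, x)
        low_rank = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * l for b, l in zip(base, low_rank)]


def lora_param_counts(d_in, d_out, rank):
    """Return (parameters in the full matrix, trainable LoRA parameters)."""
    return d_in * d_out, rank * (d_in + d_out)


W = [[0.5, -0.2, 0.1], [0.0, 0.3, -0.4]]  # hypothetical frozen weights
layer = LoRALinear(W, rank=1)
x = [1.0, 2.0, 3.0]
out = layer.forward(x)

full, trainable = lora_param_counts(4096, 4096, 8)
print(f"trainable fraction: {trainable / full:.2%}")
```

For a hypothetical 4096 × 4096 layer with rank 8, only 65,536 of about 16.8 million parameters are trained, well under 1% of the layer. Gradients and optimizer state are needed only for that small fraction, which is the main reason fine-tuning becomes feasible on constrained hardware.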

The Power of Combining Technologies

The BitNet + LoRA combination creates a highly efficient structure:

- BitNet compresses the model size
- LoRA lowers training costs

Together, they make it possible to train large-scale models on smartphones.

Performance and Test Data: Real-World Smartphone Training Results

Test data shows QVAC’s performance across various model sizes:

- 125M model: Around 10 minutes
- 1B model: About 1 hour
- 3B–4B models: Can run on high-end smartphones
- 13B model: Training completed on certain devices

In inference, mobile GPUs outperform CPUs by 2–10x, with a significant drop in memory usage.

These results indicate that end-user devices are now capable of handling medium-scale AI models. (Note: “Training” here mainly refers to fine-tuning, not full model training from scratch.)

Industry Context: Structural Shifts in AI Computing Power

The AI industry is undergoing fundamental structural changes:

- Computing power costs are rising: Training large models requires GPU clusters, which are expensive and present high entry barriers.
- Computing resources are highly concentrated: Most are controlled by a handful of tech giants, creating a “computing power monopoly.”
- The crypto industry is seeking new narratives: As market cycles evolve, the industry is looking to new growth areas such as AI, DePIN (Decentralized Physical Infrastructure Networks), and distributed computing networks.

In this context, QVAC provides a practical foundation for distributed computing networks.

Decentralized AI: Pathways from Cloud to Edge

The deeper impact of the QVAC framework is in advancing decentralized AI.

Edge Computing as a Core Node

Future AI networks may be built from vast numbers of end devices:

- Smartphones
- PCs
- IoT devices

These devices serve as both data sources and computing power providers.

The Rise of Federated Learning

QVAC supports federated learning:

- Data never leaves the device
- Models are trained through parameter sharing

This is especially critical for privacy-sensitive sectors.
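To make “training through parameter sharing” concrete, the sketch below implements generic federated averaging (FedAvg) on a toy one-parameter model. Each simulated device updates the shared weights on its own private data, and only the resulting weights are sent back to be averaged. This is an illustration of the general technique and not QVAC’s API; the function names, learning rate, and datasets are assumptions.

```python
def local_update(weights, data, lr=0.05):
    """One gradient step on a device's private data for the model y = w * x."""
    w = weights[0]
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    return [w - lr * grad]


def federated_average(client_weights, client_sizes):
    """Average client weights, weighted by how many samples each client holds."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_rounds(client_datasets, rounds=30):
    """Alternate local training and server-side averaging."""
    global_weights = [0.0]
    sizes = [len(d) for d in client_datasets]
    for _ in range(rounds):
        # Raw data stays in client_datasets; only updated weights are shared.
        updates = [local_update(global_weights, d) for d in client_datasets]
        global_weights = federated_average(updates, sizes)
    return global_weights


# Two hypothetical devices whose private data both follow y = 2x.
client_datasets = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0)]]
final = run_rounds(client_datasets)
print(final)  # the single weight ends up very close to 2.0
```

The coordinating step only ever sees weight vectors, never the `(x, y)` pairs themselves, which is the property that matters for privacy-sensitive data.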

Decentralized Computing Networks

Combined with blockchain mechanisms, this could enable:

- Users providing computing power and earning rewards
- Model training tasks distributed across the network
- AI becoming a tradable service

This vision aligns closely with the current DePIN narrative.

Business Models and Ecosystem: Who Benefits?

QVAC’s implementation will impact multiple stakeholders:

- Developers: Lower development costs, no need for cloud resources, more flexible model deployment
- Users: Greater data privacy, the ability to participate in AI training, and potential to earn rewards
- Hardware manufacturers: Enhanced value for smartphones and end devices, with AI as a new selling point
- Crypto projects: The opportunity to build distributed AI networks and innovate token economic models

Risks and Challenges: Bridging Technology and Reality

Despite the promising outlook, several real-world challenges remain:

- Performance limitations: Smartphone computing power still lags far behind data centers; complex tasks still require the cloud.
- Energy consumption and device wear: Extended training can cause overheating and battery degradation.
- Immature ecosystem: Development tools and application scenarios are still in early stages.
- Security concerns: Local models are more vulnerable to tampering, and distributed training faces attack risks.
- Incomplete business loop: Incentivizing users to provide computing power remains an open question.

Future Trends: Reshaping AI Production Dynamics

QVAC could be ushering the AI industry into a new era of production dynamics.

- AI training is becoming democratized, shifting from a system dominated by a few tech giants to an open model in which developers and even individuals can participate.
- The structure of computing power is evolving from centralized data centers to distributed networks of countless end devices.
- The nature of AI models may change, transforming from simple software tools into economic “assets” that can be traded, integrated as foundational components in various applications, and even made part of the Web3 economy.

These changes are expected to redefine AI’s production function by driving down costs, expanding participation, and accelerating innovation, pushing the industry into a more open and efficient phase.

Conclusion

Tether’s QVAC AI framework is not only a technological innovation but also a new experiment in AI infrastructure.

As “training billion-parameter models on smartphones” becomes reality, the boundaries of AI are being redefined:

- From cloud to end device
- From centralized to distributed
- From closed to open

This trend could mark a key starting point for the integration of AI and Web3 in the future.
