Grammarly’s AI “Expert Review” Sparks Controversy: Academics Criticize Unauthorized Use of Names and Imitation of Deceased Scholars

Grammarly Launches “Expert Review” Using AI to Simulate Scholar Comments, Sparking Controversy Over Unauthorized Use of Names and Academic Ethics

Grammarly’s AI “Expert Review” Feature Sparks Academic Ethics Debate

Artificial intelligence tools continue to spread through writing and research, but a new feature that generates AI-simulated expert commentary has ignited intense debate in academia over ethics and authorization. The well-known writing tool Grammarly recently introduced an AI feature called “Expert Review,” which lets users receive suggestions and comments on a document in progress, written from the simulated perspective of specific scholars or critics.

This feature analyzes the text the user is working on and automatically recommends relevant “experts” based on the topic, then generates comments using large language models. For example:

- In media critique topics, the system might emulate the style of former New York Times public editor Margaret Sullivan or former Politico media writer Jack Shafer to help refine the article.

- In legal and internet governance issues, it might mimic Harvard Law Professor Lawrence Lessig; in tech ethics or data privacy topics, it could reference AI ethics researcher Timnit Gebru or Cornell Tech Professor Helen Nissenbaum.

Grammarly states that these are not actual reviews by these experts but are generated based on their publicly available research and writings, “inspired by their ideas.” The company notes that the system selects influential scholars or critics related to the article’s topic and prompts users to explore their work further.

However, this approach has drawn strong criticism in academia, especially as some scholars have discovered that their names are being used as AI commentary personas without their prior authorization or any invitation to collaborate.

Scholars Criticize Unauthorized Use of Names and Simulation of Deceased Experts

One of the most controversial points is that the tool may simulate the commentary style of deceased scholars. Some academics argue that this amounts to “resurrecting” scholars through AI and using their names to evaluate articles.

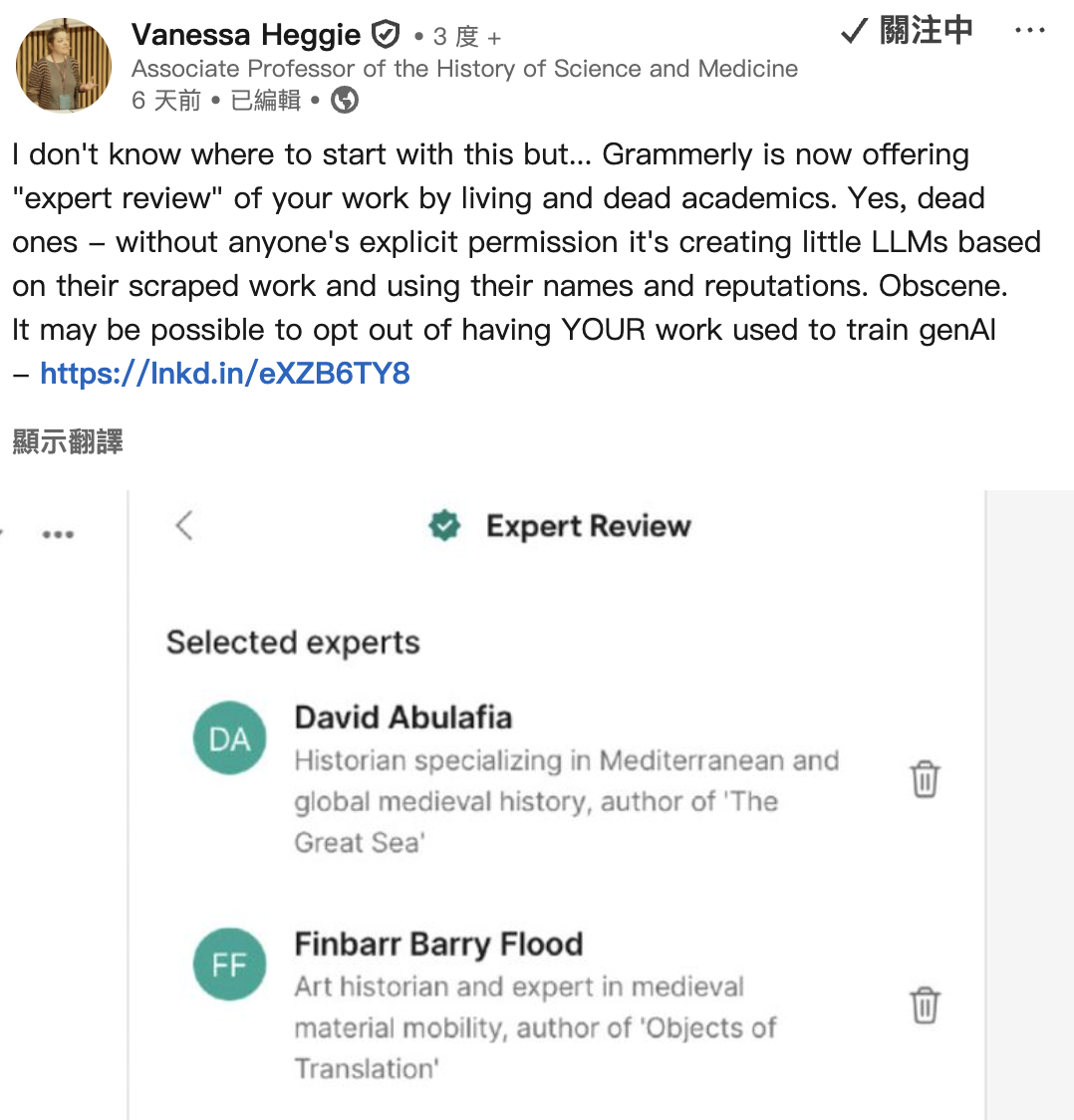

Vanessa Heggie, a history professor at the University of Birmingham in the UK, publicly criticized this on LinkedIn, pointing out that Grammarly is using scholars’ research outputs to build language models and employing their names and reputations as AI review personas. She questioned whether these scholars had authorized the company to use their work for training models or to provide “AI peer review” under their names.

Image source: LinkedIn. Vanessa Heggie, a history professor at the University of Birmingham in the UK, publicly criticizes the feature on LinkedIn.

Heggie stated that many scholars, including some who have died, are being used as review personas by AI tools. She believes that building AI models on scholars’ research and academic reputations without their consent is unacceptable.

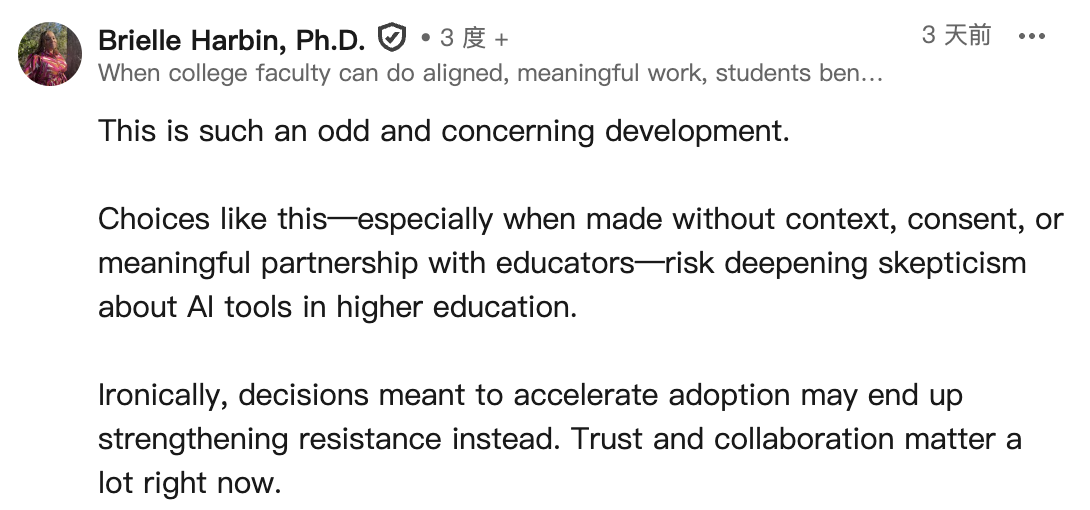

Another academic, Brielle Harbin, a former associate professor of political science at the US Naval Academy, also expressed concern. She pointed out that AI companies are creating review models under scholars’ names without any explanation, authorization, or collaboration, which could deepen distrust of AI tools in the educational community.

Image source: LinkedIn. Brielle Harbin, a former associate professor of political science at the US Naval Academy, also voiced concerns.

Harbin believes that higher education institutions remain cautious about AI tools. If tech companies ignore academic collaboration and ethical considerations when designing products, their efforts to accelerate AI adoption could instead provoke greater resistance.

Grammarly Transforms into an AI Platform, Moving from Writing Assistant to Productivity Agent

Founded in 2009, Grammarly originally positioned itself as an AI grammar and writing assistance tool. With the rapid development of generative AI, the company has gradually expanded its product from a single writing helper into a multifunctional AI productivity platform. In October 2025, Grammarly’s parent company announced a rebranding under the name “Superhuman,” signaling that its products are no longer just grammar checkers but a suite of AI work agents.

The product lineup now includes various applications such as research assistance, email drafting, scheduling, and workflow automation. Expert Review is one of the newly launched AI agent services. According to official statements, Grammarly aims for Expert Review to help students, researchers, and professionals improve their writing quality. For example, when users draft marketing proposals, academic reports, or business documents, the system analyzes the content and offers comments simulating expert opinions.

The company emphasizes that the feature does not claim that these experts actually participated in the review or that they endorse the content; rather, it uses large language models to organize relevant scholars’ research findings into suggestions.

However, this “simulated expert perspective” approach has been criticized for potentially misleading users into believing these comments are directly associated with the scholars themselves.

AI Imitation of Celebrities Becomes a New Trend in Tech Industry

Grammarly is not the only company attempting to imitate scholars or celebrities with AI. In recent years, many tech firms have launched similar products, hoping to enhance AI interaction experiences by leveraging well-known personalities.

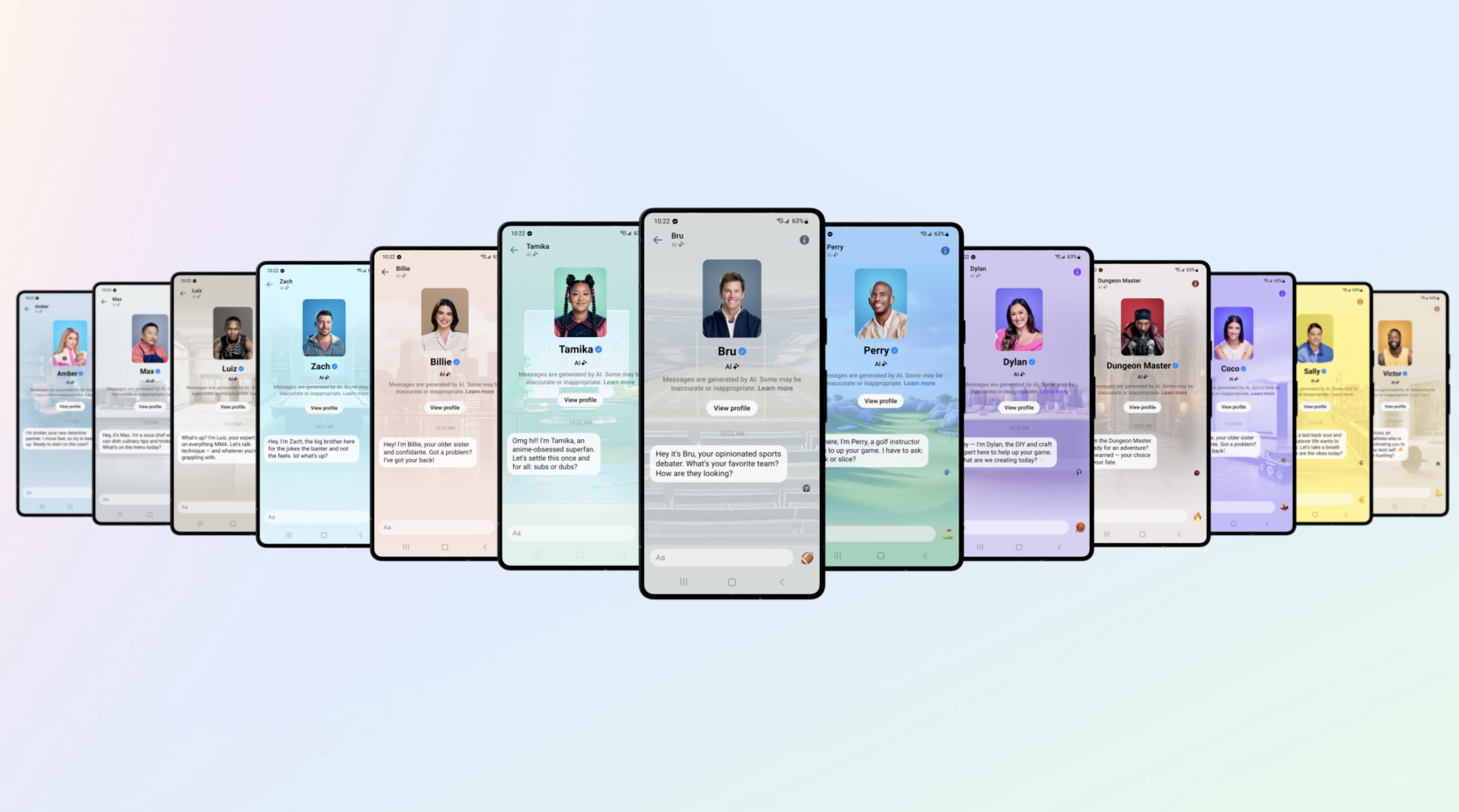

In 2023, Meta released a series of celebrity chatbots on its Meta AI platform, including AI personas modeled after rapper Snoop Dogg, NFL star Tom Brady, model Kendall Jenner, and tennis player Naomi Osaka. These AI characters interact using the celebrities’ images and speech styles, attracting users to try AI chat services.

Image source: Meta. Meta launched a series of celebrity chatbots on its Meta AI platform.

The education sector has also adopted similar models. The online education platform Khan Academy introduced its AI teaching assistant “Khanmigo,” which lets students hold simulated conversations with historical figures. For example, students can talk with an AI version of Winston Churchill or Harriet Tubman to better understand historical backgrounds and ideas.

However, as AI models increasingly mimic individual styles, discussions about authorization and personality rights have intensified. Some experts warn that when AI systems offer opinions under the identities of scholars, writers, or celebrities, they may distort public perception of those individuals’ actual views. Academics suggest that AI companies should adopt clearer licensing mechanisms and greater transparency when using scholars’ or celebrities’ ideas and images. Otherwise, even output that is technically only “inspired” by someone’s work could still raise issues of reputation, intellectual property, and academic ethics.